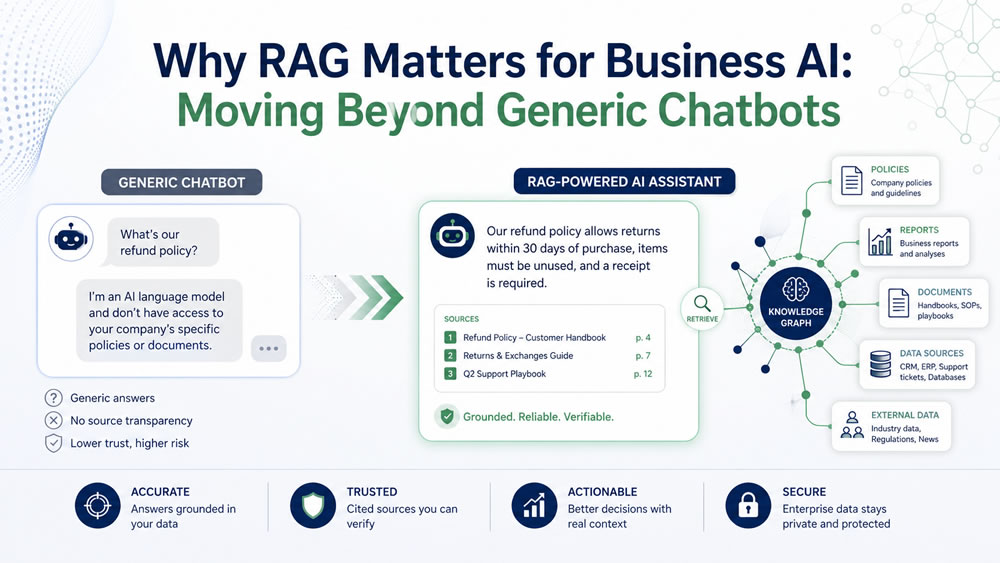

Many organizations begin their AI journey with generic chatbots. Employees ask questions, draft content, summarize ideas, and explore possibilities. This can be useful for productivity and learning. But when organizations want AI to answer business-specific questions, generic chatbots quickly reveal their limits.

A generic AI model does not automatically know your policies, procedures, reports, metrics, contracts, customer history, product documentation, or internal definitions. If it does not have access to trusted organizational knowledge, it may produce answers that sound confident but are incomplete, outdated, or wrong.

This is why RAG matters. RAG stands for Retrieval-Augmented Generation. In plain language, RAG allows an AI system to retrieve relevant information from trusted sources and use that information to generate a better answer. For business AI, RAG is one of the key bridges between generic AI and trusted organizational AI.

The Problem With Generic Chatbots

Generic chatbots are trained on broad patterns from large amounts of data. They are useful for general writing, brainstorming, summarization, and explanation. But they are not automatically grounded in your organization’s current truth.

Ask a generic chatbot, “What is our travel policy?” and it may produce a plausible policy-like answer. But unless it has access to your approved internal policy, it is guessing based on general patterns.

Ask, “Which customer segment is most profitable?” and it cannot know unless it has access to the correct data, definitions, and metrics.

Ask, “What should our support team do when a customer requests an exception?” and it may answer generically unless it can retrieve your actual SOPs, escalation rules, and customer policies.

This creates a business risk: generic answers may appear useful while failing to reflect organizational reality.

What RAG Does

RAG changes the process. Instead of relying only on what the model already knows, a RAG system searches a trusted knowledge base, retrieves relevant content, and gives that content to the AI model as context.

A simplified RAG workflow looks like this:

- A user asks a question.

- The system searches trusted documents or data sources.

- It retrieves relevant passages or records.

- The AI uses those sources to generate an answer.

- The answer may include references or source links.

This does not make AI perfect, but it improves grounding. The answer is more likely to reflect approved internal information.
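The workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the knowledge base contents, the file names, and the helper names (`retrieve`, `build_prompt`) are all hypothetical, and simple word overlap stands in for the embedding-based or hybrid search a real system would likely use. The final call to an AI model is left out; the sketch stops at the prompt that would be sent to it.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical document identifier
    text: str


# Hypothetical trusted knowledge base; the contents are made up for illustration.
KNOWLEDGE_BASE = [
    Passage("travel-policy.md", "Employees must book economy class for flights under six hours."),
    Passage("expense-policy.md", "Meal expenses are reimbursed up to the daily limit with receipts."),
    Passage("support-sop.md", "Escalate customer exception requests to the team lead."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, passages: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by word overlap with the question (a stand-in for real search)."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]  # drop non-matches
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_prompt(question: str, retrieved: list[Passage]) -> str:
    """Give the retrieved passages to the model as labeled context."""
    context = "\n".join(f"[{p.source}] {p.text}" for p in retrieved)
    return (
        "Answer using only the sources below and cite them.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


question = "Which class should employees book for flights?"
hits = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, hits)
print(prompt)
```

Only the travel policy passage shares words with the question, so it is the only source placed in the prompt. Labeling each passage with its source is what later makes references or source links possible.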

Why RAG Matters for Business

Business questions often require context. They are not purely general knowledge questions. They depend on internal rules, definitions, history, documents, and decisions. RAG matters because it helps organizations move from generic AI to context-aware AI. Common business uses include:

- policy Q&A

- HR knowledge assistants

- customer support knowledge bases

- compliance document search

- legal and contract review support

- training material synthesis

- research report summarization

- internal SOP guidance

- product documentation assistants

- executive briefing support

In each case, the value comes from grounding AI in the right sources.

RAG Is Not Just PDF Search

Some people think RAG is simply uploading PDFs and asking questions. That is a starting point, but enterprise RAG is more than PDF search. A mature RAG system requires attention to:

- source selection

- document quality

- chunking strategy

- metadata

- embeddings

- retrieval quality

- ranking

- access permissions

- source freshness

- answer validation

- user feedback

- monitoring

If the retrieval step finds the wrong documents, the AI may generate an answer that is irrelevant or unsupported. If documents are outdated, the answer may be outdated. If permissions are not respected, the system may reveal sensitive information. If sources conflict, the system may produce confusing output. RAG is not magic. It is an architecture for connecting AI to knowledge.
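Two of these concerns, access permissions and source freshness, can be enforced as a filter that runs before any document reaches the model. The sketch below assumes each document carries metadata for allowed groups and a last-reviewed date; the field names, the group names, and the one-year threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Document:
    doc_id: str
    allowed_groups: frozenset  # hypothetical access-control metadata
    last_reviewed: date
    text: str


def eligible(doc, user_groups, today, max_age_days=365):
    """Keep a document only if the user may read it and it was reviewed recently."""
    has_access = bool(doc.allowed_groups & user_groups)
    is_fresh = (today - doc.last_reviewed).days <= max_age_days
    return has_access and is_fresh


# Made-up documents for illustration.
docs = [
    Document("hr-leave-policy", frozenset({"all-staff"}), date(2024, 3, 1), "..."),
    Document("exec-comp-plan", frozenset({"executives"}), date(2024, 2, 1), "..."),
    Document("old-travel-policy", frozenset({"all-staff"}), date(2020, 1, 15), "..."),
]

today = date(2024, 6, 1)  # fixed date so the example is reproducible
user_groups = frozenset({"all-staff", "support"})

usable = [d.doc_id for d in docs if eligible(d, user_groups, today)]
print(usable)  # ['hr-leave-policy']
```

Filtering before generation means a restricted or stale document never appears in the model's context, which is generally safer than asking the model to ignore it.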

Knowledge Readiness Comes Before RAG

Before building a RAG system, organizations should assess knowledge readiness. Important questions include:

- Where do our important documents live?

- Which documents are authoritative?

- Which documents are outdated?

- Who owns each knowledge area?

- Are there conflicting versions?

- Are documents structured clearly?

- Do we have metadata?

- What content is sensitive?

- Who should access what?

- How often should sources be updated?

Many RAG projects struggle because organizations try to build AI on top of disorganized knowledge. If the knowledge environment is weak, RAG will expose the weakness.
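Several of the readiness questions above can be checked mechanically once a document inventory exists. The following sketch assumes a simple inventory of title, version, owner, and last-updated date; the rows, the two-year staleness threshold, and the `audit` function are hypothetical and only illustrate the idea.

```python
from collections import defaultdict
from datetime import date

# Hypothetical inventory rows: (title, version, owner, last_updated).
inventory = [
    ("Travel Policy", "v3", "Finance", date(2024, 1, 10)),
    ("Travel Policy", "v2", "Finance", date(2021, 5, 2)),
    ("Onboarding Guide", "v1", None, date(2023, 11, 20)),
    ("Escalation SOP", "v4", "Support", date(2019, 8, 30)),
]


def audit(rows, today, stale_after_days=730):
    """Flag documents with no owner, stale content, or multiple versions."""
    versions = defaultdict(set)
    findings = []
    for title, version, owner, updated in rows:
        versions[title].add(version)
        if owner is None:
            findings.append((title, version, "no owner"))
        if (today - updated).days > stale_after_days:
            findings.append((title, version, "stale"))
    for title, seen in versions.items():
        if len(seen) > 1:
            findings.append((title, "/".join(sorted(seen)), "multiple versions"))
    return findings


findings = audit(inventory, today=date(2024, 6, 1))
for finding in findings:
    print(finding)
```

With this sample data the audit reports two stale documents, one document without an owner, and one title that exists in two versions. Questions about authority and sensitivity still need human judgment.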

RAG and Structured Data

RAG is often discussed in relation to documents, but business knowledge is not only unstructured text. It may also include structured data such as customer records, sales tables, operational metrics, financial data, inventory records, and performance dashboards. A strong business AI system may need both:

- unstructured knowledge, such as policies, reports, PDFs, manuals, and notes

- structured data, such as metrics, transactions, tables, and business definitions

For example, a leader may ask, “Why did customer complaints increase last month?” A useful answer may require support tickets, policy changes, staffing data, customer segments, and operational metrics. This is where RAG connects to broader AI-ready data foundations. The organization needs trusted metrics, semantic definitions, and governed data access, not only document retrieval.
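As a rough sketch of the complaints example, the code below combines a structured metric from a table with an unstructured note into one context for the model. Everything in it is invented for illustration: the table schema, the numbers, the note, and its file name. An in-memory SQLite database stands in for a governed data source.

```python
import sqlite3

# Structured side: a made-up complaints table in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE complaints (month TEXT, segment TEXT, n INTEGER)")
conn.executemany(
    "INSERT INTO complaints VALUES (?, ?, ?)",
    [
        ("2024-04", "retail", 40),
        ("2024-05", "retail", 95),
        ("2024-04", "enterprise", 12),
        ("2024-05", "enterprise", 14),
    ],
)

# Month-over-month change in complaints per customer segment.
rows = conn.execute(
    "SELECT segment, "
    "SUM(CASE WHEN month = '2024-05' THEN n ELSE 0 END) - "
    "SUM(CASE WHEN month = '2024-04' THEN n ELSE 0 END) AS delta "
    "FROM complaints GROUP BY segment ORDER BY delta DESC"
).fetchall()

# Unstructured side: a hypothetical retrieved note, kept with its source.
notes = [
    ("policy-change-2024-05.md", "Return window shortened from 30 to 14 days for retail orders."),
]

# Combine both kinds of evidence into one context for the model.
metric_lines = [f"{segment}: {delta:+d}" for segment, delta in rows]
note_lines = [f"[{source}] {text}" for source, text in notes]
context = "Complaint change by segment:\n" + "\n".join(metric_lines)
context += "\nRelated documents:\n" + "\n".join(note_lines)
print(context)
```

The metric shows where complaints rose, and the document suggests a possible reason. Neither source alone would answer the question.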

RAG and Trust

Trust is one of the most important reasons RAG matters. In business settings, users need to know where an answer came from. They need to check the source. They need to distinguish between retrieved evidence and AI-generated interpretation. A good RAG system should help users answer:

- What sources were used?

- Are those sources approved?

- How recent are they?

- What part of the answer is directly supported?

- What part is interpretation?

- What should be verified before action?

This is especially important for leadership, HR, compliance, legal, finance, healthcare, public-sector, and customer-facing use cases.
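One way to support these questions is to keep each statement in an answer attached to its source, so that evidence and interpretation stay distinguishable. The structure below is a hypothetical sketch; the class names, the sample statements, and the file name are assumptions, not a standard format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Statement:
    text: str
    source: Optional[str] = None  # None marks AI-generated interpretation


@dataclass
class Answer:
    statements: list = field(default_factory=list)

    def supported(self):
        """Statements backed directly by a retrieved source."""
        return [s for s in self.statements if s.source]

    def interpretation(self):
        """Statements with no source, which users should verify."""
        return [s for s in self.statements if not s.source]

    def render(self):
        """Show every statement with a visible evidence label."""
        lines = []
        for s in self.statements:
            if s.source:
                tag = f"[source: {s.source}]"
            else:
                tag = "[interpretation, verify before acting]"
            lines.append(f"{s.text} {tag}")
        return "\n".join(lines)


# Made-up example answer.
answer = Answer([
    Statement("Exception requests go to the team lead.", source="support-sop.md"),
    Statement("This request likely qualifies as an exception."),
])
print(answer.render())
```

The first line is rendered with its source, and the second is labeled as interpretation. A user can see at a glance which part to check against the document and which part to verify independently.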

RAG and Governance

RAG also introduces governance needs. Organizations must decide:

- which sources are allowed

- who can access which knowledge

- how sensitive content is protected

- how answers should cite sources

- how hallucinations are detected

- how users report poor answers

- how content is updated

- who owns the knowledge base

- how the system is monitored

Without governance, a RAG system may become another unreliable information channel.

Moving From Chatbot to Knowledge Assistant

A generic chatbot answers from general patterns. A RAG-powered knowledge assistant answers from selected organizational sources. That shift matters.

A chatbot may help draft an HR policy. A knowledge assistant can answer questions based on the approved HR policy. A chatbot may explain what customer churn means. A grounded assistant can help analyze churn using the company’s definitions, data, and reports. A chatbot may provide generic compliance advice. A RAG system can retrieve relevant internal compliance documents and summarize them with source references. This is the move from generic AI to business AI.

Common RAG Mistakes

Organizations should avoid several mistakes.

- Do not assume uploading documents is enough. Retrieval quality matters.

- Do not ignore document governance. Outdated or conflicting documents reduce trust.

- Do not treat RAG answers as automatically correct. Grounding improves reliability but does not eliminate errors.

- Do not forget access control. AI systems must respect permissions.

- Do not build RAG without user training. Users need to understand how to ask questions and verify answers.

Final Thought

RAG matters because organizations do not need AI that only sounds smart. They need AI that works with trusted business knowledge. Generic chatbots are useful for many tasks, but they are not enough for serious organizational knowledge work. Business AI needs grounding, retrieval, governance, and source awareness. RAG is one of the practical foundations for that shift. It helps organizations move from “AI that guesses” to “AI that retrieves, cites, and supports better decisions.” For any organization planning internal AI assistants, policy Q&A, knowledge systems, decision support, or agentic workflows, RAG is not optional knowledge. It is a core concept.